AI Coding Assistants Boost Speed but Lower Skill Mastery in New Study


AI can cut task time by up to 80% but may reduce engagement. Prior observational work showed that AI tools such as Claude.ai can speed up parts of work by as much as 80%, yet separate studies found that users become less engaged with their work and offload thinking to the AI, which may undermine their own effort. [3][5][6]

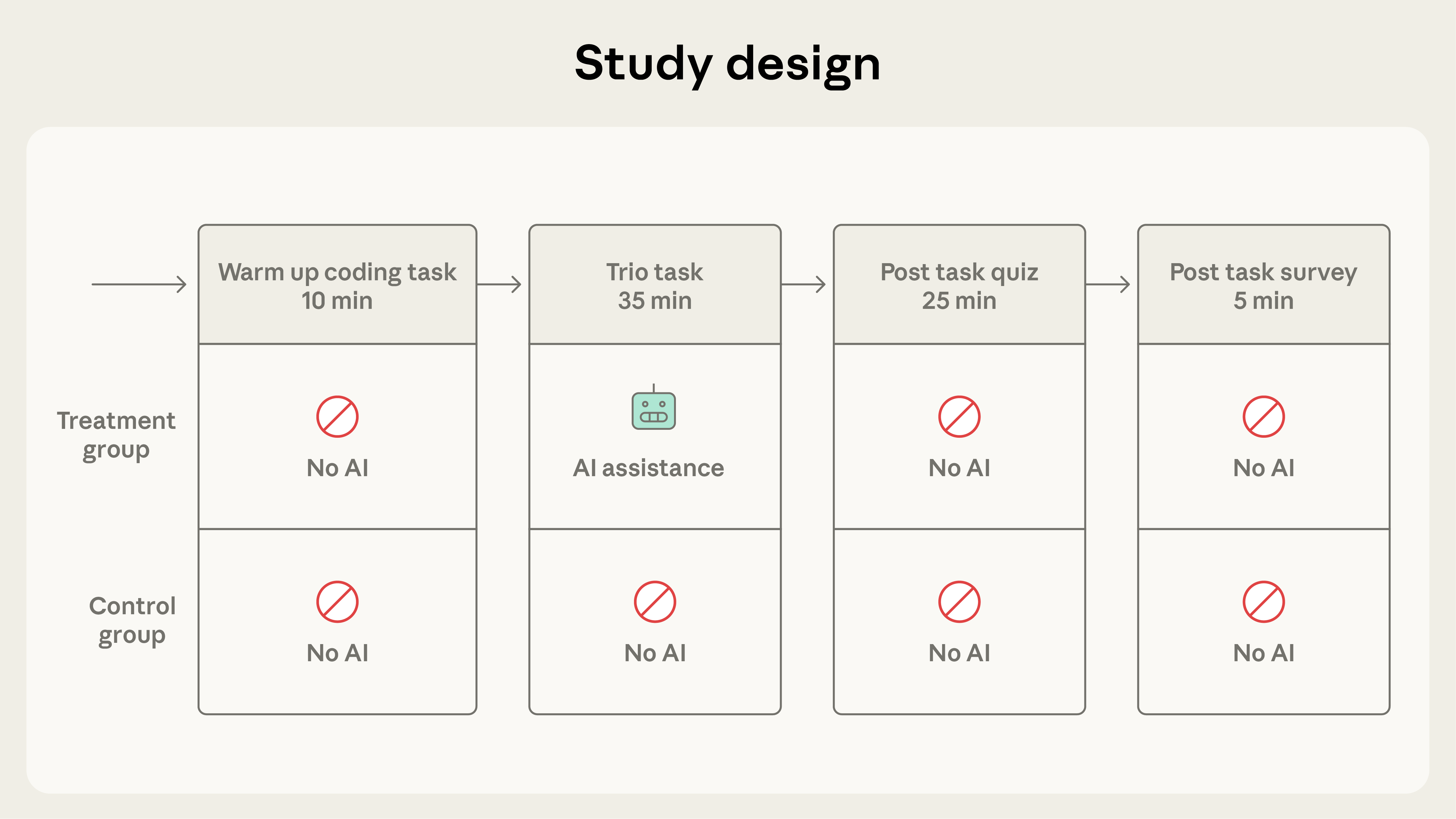

Randomized trial finds quiz scores 17 percentage points lower with AI help. In a controlled experiment with 52 mostly junior Python developers learning the Trio library, participants who used AI assistance scored 50% on a post‑task quiz versus 67% for those who coded manually, a 17‑percentage‑point drop equivalent to nearly two letter grades. [1]
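The gap above is stated in percentage points, which is not the same as a 17% relative decline. A minimal sketch of the arithmetic, using only the two group averages reported here (the function name `score_gap` is illustrative and not from the study):

```python
def score_gap(control_pct: float, treatment_pct: float) -> tuple[float, float]:
    """Return (percentage-point gap, relative drop in percent).

    control_pct: average quiz score of the hand-coding group.
    treatment_pct: average quiz score of the AI-assisted group.
    """
    point_gap = control_pct - treatment_pct
    relative_drop = point_gap / control_pct * 100
    return point_gap, relative_drop


# Group averages as reported in the summary above: 67% manual, 50% with AI.
points, relative = score_gap(control_pct=67, treatment_pct=50)
print(f"{points:.0f} percentage points, {relative:.1f}% relative drop")
# prints "17 percentage points, 25.4% relative drop"
```

Measured against the manual group's score, the AI group's result is roughly a quarter lower, so "17 percentage points" is the more precise way to state the difference.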

AI assistance yields modest, non‑significant speed gain. The AI group completed the coding tasks on average two minutes faster than the hand‑coding group, but the difference did not reach statistical significance, suggesting limited productivity benefit for novel learning tasks. [1]

Interaction style predicts learning outcomes. High‑scoring participants combined code generation with follow‑up conceptual queries or hybrid code‑explanation requests. Low‑scoring participants, whose quiz scores fell below 40%, either delegated all coding to the AI or relied heavily on it for debugging. [1]

Debugging ability suffers most from AI reliance. The biggest performance gap between groups occurred on debugging questions, indicating that AI use may particularly impair developers’ capacity to identify and diagnose code errors. [1]

Study warns of skill trade‑offs as AI scales in workplaces. Researchers conclude that aggressive deployment of AI coding tools could stunt junior engineers’ skill development, especially in error detection, and advise managers to design AI integrations that preserve intentional learning and oversight. [1]

Links

- [1] https://www.anthropic.com/research/AI-assistance-coding-skills

- [2] https://papers.ssrn.com/sol3/papers.cfm?abstract_id=4945566

- [3] https://www.anthropic.com/research/estimating-productivity-gains

- [4] http://claude.ai/redirect/website.v1.b23a11d4-c9ba-434c-83eb-082f855a0d87

- [5] https://www.nature.com/articles/s41598-025-98385-2

- [6] https://www.microsoft.com/en-us/research/wp-content/uploads/2025/01/lee_2025_ai_critical_thinking_survey.pdf

- [7] https://ieeexplore.ieee.org/document/9962584

- [8] https://code.claude.com/docs/en/output-styles

- [9] https://openai.com/index/chatgpt-study-mode/

- [10] https://arxiv.org/abs/2302.06590

- [11] https://arxiv.org/abs/2507.09089

- [12] https://www.anthropic.com/research/estimating-productivity-gains

- [13] https://arxiv.org/abs/2601.20245